Let’s be real—artificial intelligence isn’t “coming soon.” It’s already in your workplace.

But here’s the twist: it might be there without your approval.

Across the country, employees are using tools like ChatGPT, Copilot, and Claude to get work done faster. They aren’t waiting for IT sign-offs or policy updates. They’re finding shortcuts, and while those shortcuts boost productivity, they also quietly open the door to one of the fastest-growing cybersecurity risks in business today: Shadow AI.

The Rise of Shadow AI (And Why It Matters)

Recent studies show how far this quiet AI revolution has spread. A survey from Cybernews found that 59% of U.S. employees use unapproved AI tools and hide it from their managers. The trend is driven by a need for efficiency, with nearly two-thirds of employees paying out of pocket for these tools (Inc, Gusto).

The problem is, this pursuit of speed often comes at the expense of security. According to Anagram, 58% of employees have entered sensitive data like financial records or client details into these public tools, and 40% admit they’d knowingly break the rules if it meant completing a task faster.

If your people are doing this, it’s not because they’re careless—it’s because they’re under pressure to get results. They just don’t realize how much company data they’re exposing in the process.

“We’re on a Paid Plan—So We’re Safe… Right?”

Not necessarily. A common misconception is that paying for an AI tool means your data is secure. In reality, even paid platforms can store your data or use it for training unless you find the relevant privacy settings and opt out.

For example, Anthropic recently updated its terms for consumer plans (Claude Free, Pro, and Max) to state that inputs can be stored for up to five years and used to train future models unless users manually change their privacy settings (Anthropic). While enterprise plans are excluded, many small and midsize businesses don’t use them, meaning even paid subscribers could be unintentionally sharing sensitive data.

And Anthropic isn’t alone. Companies like Zoom, Rev, and Meta have also modified their terms to allow AI training on customer content (Business Insider). The FTC has even warned that changing terms after the fact to use customer data may be considered an “unfair or deceptive” practice (FTC).

If your team is using public AI tools for client deliverables or internal reports, your organization could already be leaking confidential information, one prompt at a time.

So What Is Secure AI?

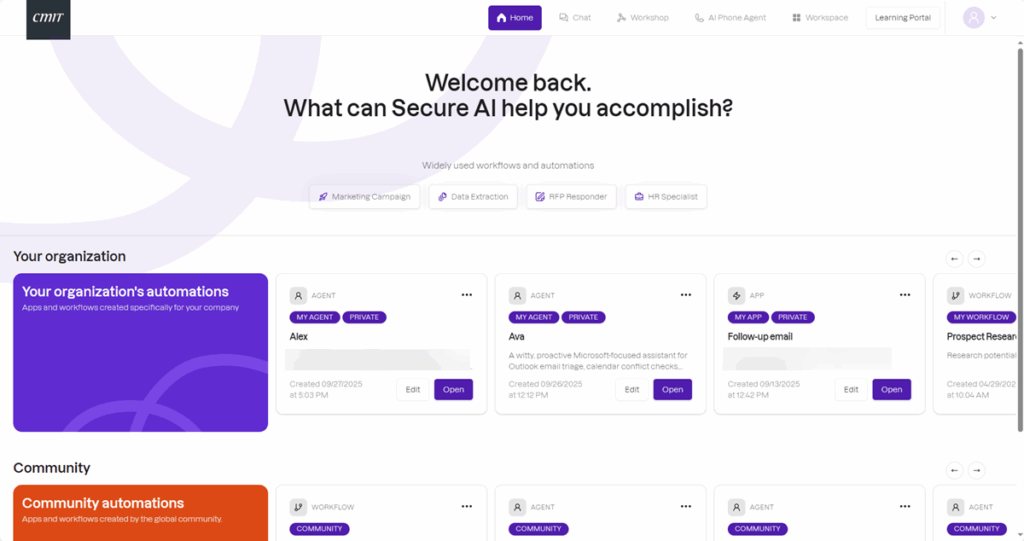

This is where a private, managed platform makes a difference. Secure AI by CMIT Solutions of Fort Lauderdale is a private AI platform designed specifically for small and midsize businesses that need the power of artificial intelligence without the security risks.

It gives your team a secure environment to chat, generate content, analyze data, and automate workflows, all under your organization’s control. It provides enterprise-grade data protection and compliance controls while giving you access to the most powerful AI models.

What Makes CMIT Fort Lauderdale’s Secure AI Different?

CMIT Fort Lauderdale’s Secure AI was built for businesses that can’t afford to take chances with data. It delivers the productivity of AI without sacrificing privacy, making it ideal for industries where security isn’t optional—like legal, financial, healthcare, and logistics.

Here’s what sets it apart:

- It never trains on your data. Ever. Your information remains completely private.

- All content is protected with strict data privacy controls.

- It operates in a secure, U.S.-based cloud environment.

- It’s compliant with leading data protection regulations and frameworks, including HIPAA, GDPR, and SOC 2.

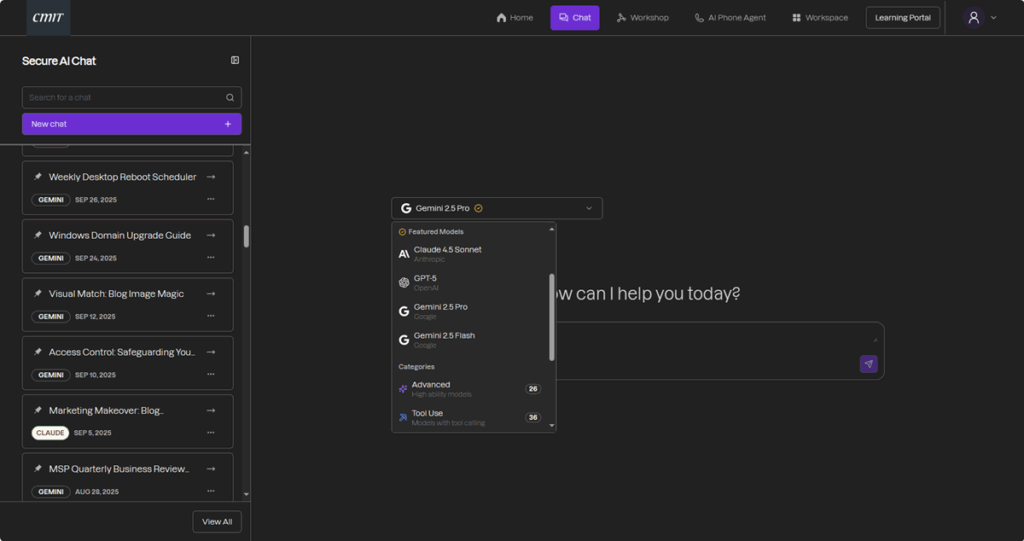

And you aren’t limited to a single AI engine. Inside your private workspace, you can safely access a range of today’s leading large language models, including ChatGPT (GPT-4, GPT-4o, and GPT-5), Google Gemini, Anthropic Claude, and Meta Llama, all contained within an encrypted environment.

This means your teams can brainstorm, summarize, create visuals, or process complex data using whichever AI best fits the job, all while maintaining strict data isolation. It also integrates with the tools your business already runs on—from Microsoft 365 and Google Workspace to Salesforce and HubSpot—so AI fits into your existing workflows, not the other way around.

The Secure AI dashboard gives teams a private, compliant environment to harness AI safely.

Why This Matters to Your Business

If your employees are already using AI—and statistically, they are—then sensitive data could be leaving your organization without anyone realizing it. That’s not hype; it’s data.

A secure AI platform closes that gap between innovation and exposure. It helps your team work faster without cutting corners, giving you both progress and protection. Because protecting your data shouldn’t mean saying “no” to AI. It means saying “yes” to using it the right way.

Secure AI vs. Public AI: What Really Changes?

If you’ve ever wondered what the actual difference is between public AI tools and a secure platform, it comes down to control and confidentiality.

Secure AI lets users select from multiple large language models, such as ChatGPT, Claude, and Gemini, organized by capability, including advanced reasoning and image generation.

This isn’t about fear; it’s about clarity. For most businesses, the difference isn’t academic; it’s operational. It’s the difference between moving forward with confidence and just hoping for the best.

The Bottom Line

AI itself isn’t the problem—unsecured AI is.

If your organization wants to leverage the power of automation without the risk of breaches, leaks, or compliance violations, a secure AI platform delivers the best of both worlds: productivity for your people and peace of mind for you.

It’s private, it’s encrypted, and it ensures your data never becomes someone else’s training material.

Ready to use AI safely? Schedule your Secure AI consultation today and see how your business can innovate with confidence.