As a business leader preparing for your busiest season, you’re focused on inventory, staffing, and sales, but there’s an invisible threat growing more sophisticated by the day: AI-driven cyberattacks.

All businesses face these AI-driven risks, but small and mid-sized businesses (SMBs) are especially attractive targets, as they often lack the extensive security infrastructure of larger corporations or access to dedicated cybersecurity services.

To address these risks, this article explains why a practical, human-centered strategy is more effective than focusing only on complex technological solutions. Let’s begin by exploring how AI influences cyberattacks.

How Does AI Affect Cyberattacks?

To understand this shift in cyberattacks, we first need to ask, “How has AI impacted businesses?”

AI has changed how businesses operate by increasing speed and automation — but it has also expanded cyber risk.

- Cybercriminals now use AI to create more convincing scams and impersonation attacks like phishing emails, fake invoices, and deepfake voice calls — requiring businesses to strengthen verification processes, employee awareness, and security controls to protect operations.

These AI-driven tactics:

- Are harder to spot — especially during busy periods like the holidays.

- Exploit trust and urgency.

So, where do 90% of all cyber incidents begin?

By commonly cited industry estimates, over 90% of cyber incidents, including data breaches, begin with phishing attacks. These attacks exploit human error, using deceptive emails to trick users into clicking malicious links, downloading malware, or revealing sensitive information like passwords. That makes people the primary entry point for criminals.

Next, let’s examine why the unique psychological and operational pressures of the holiday season increase vulnerability across teams.

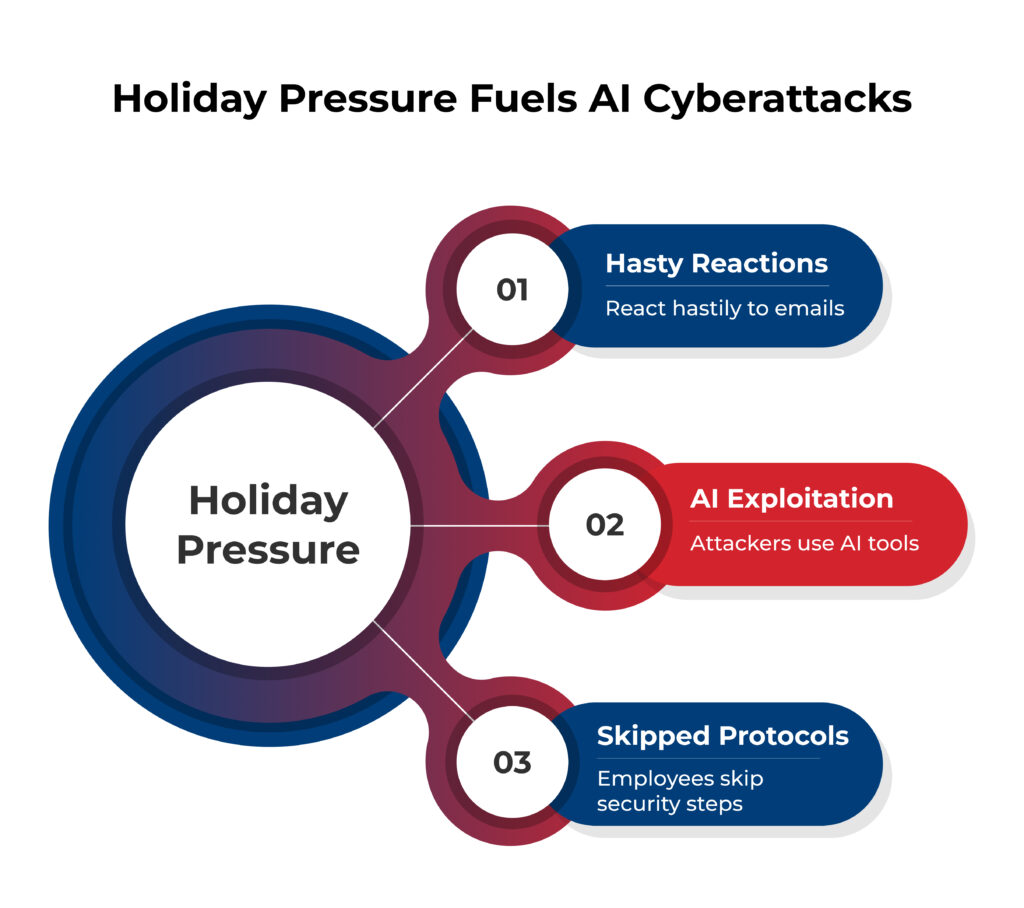

Why Holiday Pressure Creates Prime Opportunities for Attackers

During the holiday season, companies function under immense operational pressure, with deadlines looming and resources stretched to their limits.

- Staff are often stretched thin, customer service teams become overloaded, and departments focus intensely on speed over security to meet seasonal demands.

Cybercriminals deliberately capitalize on these predictable, high-pressure conditions — knowing that stressed employees are more likely to skip standard security protocols or react hastily to an urgent-looking email.

And this vulnerability is exploited through social engineering — an attack method designed to manipulate human behavior to achieve malicious goals like transferring money or sharing sensitive data.

- Modern attackers have supercharged this threat by using AI to craft messages that perfectly mirror legitimate business correspondence.

These AI-powered campaigns frequently target employees in finance, HR, and leadership roles — individuals with direct access to sensitive systems and payment authorization.

Attackers also leverage AI to create deepfakes (deceptive audio or video files) to launch convincing social engineering attacks. AI also allows criminals to efficiently identify and pursue the highest-value targets within an organization, making their efforts far more dangerous.

This combination of holiday pressure and advanced AI tools creates the perfect storm for AI-driven cyber threats. And to truly understand the risk, it is essential to see how these threats play out in real-world business scenarios — our next area of exploration.

Identifying Deceptive AI Scenarios Targeting Your Business

Let’s look at two practical scenarios to ground these threats in reality.

Scenario 1: AI Invoice Scam

- The Attack: Your accounts payable clerk receives what looks like a perfect invoice from a regular supplier. It has the correct letterhead, familiar order numbers, and typical formatting, so it appears entirely legitimate. The only detail that has changed is the bank account information, which now routes funds directly to an attacker.

- Why It Works: This is a form of AI-automated, personalized phishing. Using Generative AI, attackers can scour publicly available information, such as vendor websites and past communications, to create these hyper-personalized forgeries. Unlike the generic phishing scams of the past, these AI-driven cyber threats mimic your actual vendor relationships with frightening accuracy, exploiting established trust.

Scenario 2: Deepfake Voice Call Scam

- The Attack: An employee receives a panicked, urgent call from what sounds exactly like their superior. The cloned voice, using familiar cadence and phrases replicated from public sources, demands an immediate fund transfer or purchase — creating immense pressure to bypass standard checks.

- Why It Works: This executive impersonation works because AI voice cloning technology can create a replica so realistic it is virtually indistinguishable from the real person. Attackers can use Generative AI to harvest voice samples from your business’s public videos, podcasts, or social media posts. The goal of this AI-driven social engineering is to manufacture such urgency that an employee feels compelled to override procedures — effectively bypassing your financial controls.

These scenarios show that while the attacks are technological, they succeed by exploiting human trust and pressure. This highlights a critical vulnerability that can’t be patched with software alone, paving the way for our next focus — building your human firewall.

Your Human Firewall is the Strongest Defense

- First, implement the “Two-Channel Rule.” Require all payment changes to be verified through a secondary communication channel, not just email. This means calling the vendor using a phone number from your official records — not one provided in a potentially fraudulent email.

- Next, establish a verbal verification code — a simple, changing phrase known only to key personnel. Anyone requesting sensitive actions must provide this code, turning it into a straightforward security checkpoint.

- Now, create your verification playbook. This is a simple, one-page list of critical verification steps — from checking payment details to confirming unusual requests. Post it in break rooms and near workstations so it is always visible.

- Finally, foster a culture of healthy skepticism. During employee training and awareness sessions, emphasize the core principle: “We’d rather delay a transaction than lose everything.” Create a blame-free environment and explicitly reward employees who catch potential scams — encouraging everyone to speak up.

This focus on employee training and awareness is crucial because it teaches your team to spot red flags, such as unusual requests or pressure to bypass procedures, that are common to sophisticated AI-driven cyber threats.
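For teams that track payment changes in software, the Two-Channel Rule can be expressed as a simple approval gate. The following is a minimal sketch, not a finished control: `VendorRecord`, `PaymentChangeRequest`, `record_callback`, and `may_apply_change` are hypothetical names invented for illustration, and the vendor record is assumed to come from your own accounting system.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class VendorRecord:
    """Hypothetical vendor master record kept in your own official records."""
    name: str
    phone_on_file: str
    bank_account_on_file: str


@dataclass
class PaymentChangeRequest:
    """A request to change where a vendor gets paid."""
    vendor_name: str
    new_bank_account: str
    received_via: str                                  # e.g. "email"
    confirmed_via: set = field(default_factory=set)    # channels used to confirm


def record_callback(request: PaymentChangeRequest,
                    vendor: VendorRecord,
                    number_dialed: str) -> bool:
    """Log a phone confirmation only if the number dialed is the one on file.

    A number copied from the incoming email or invoice does not count.
    """
    if number_dialed != vendor.phone_on_file:
        return False
    request.confirmed_via.add("phone")
    return True


def may_apply_change(request: PaymentChangeRequest) -> bool:
    """Two-Channel Rule: require a confirmation on a channel other than
    the one the request arrived on."""
    return any(channel != request.received_via
               for channel in request.confirmed_via)
```

In this sketch, a request that arrives by email stays blocked until someone confirms it by calling the number already on file, which mirrors the manual process described in the list above.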

- In essence, building this human firewall by creating a verification culture is a proactive, multi-layered approach. It integrates human oversight to form a security layer that sophisticated AI-driven deception can’t easily penetrate.
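One way to keep the verbal verification code "changing" without emailing a new phrase around is to derive it each day from a secret shared only among key personnel. The sketch below is an illustration under stated assumptions: the word list, the daily rotation, and the function names `phrase_for` and `phrase_matches` are all hypothetical, and a real word list should be far longer than sixteen entries.

```python
import hashlib
import hmac
from datetime import date

# Illustrative word list only; a production list should be much larger.
WORDS = ["amber", "birch", "cedar", "delta", "ember", "fjord", "grove", "harbor",
         "iris", "juniper", "kite", "lantern", "maple", "north", "orchid", "pebble"]


def phrase_for(secret: bytes, day: date, n_words: int = 3) -> str:
    """Derive the day's phrase from the shared secret and the calendar date."""
    digest = hmac.new(secret, day.isoformat().encode(), hashlib.sha256).digest()
    # Each of the first n_words digest bytes selects one word from the list.
    return "-".join(WORDS[b % len(WORDS)] for b in digest[:n_words])


def phrase_matches(secret: bytes, day: date, spoken: str) -> bool:
    """Compare what the caller said with the expected phrase for that day."""
    expected = phrase_for(secret, day)
    candidate = spoken.strip().lower()
    return hmac.compare_digest(expected.encode(), candidate.encode())
```

Anyone holding the secret can compute the same phrase for a given date, while a caller who only has a cloned voice cannot.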

While this empowered human firewall is your most critical defense layer, it becomes far harder to breach when reinforced by a few foundational technical safety nets. Let’s explore these next.

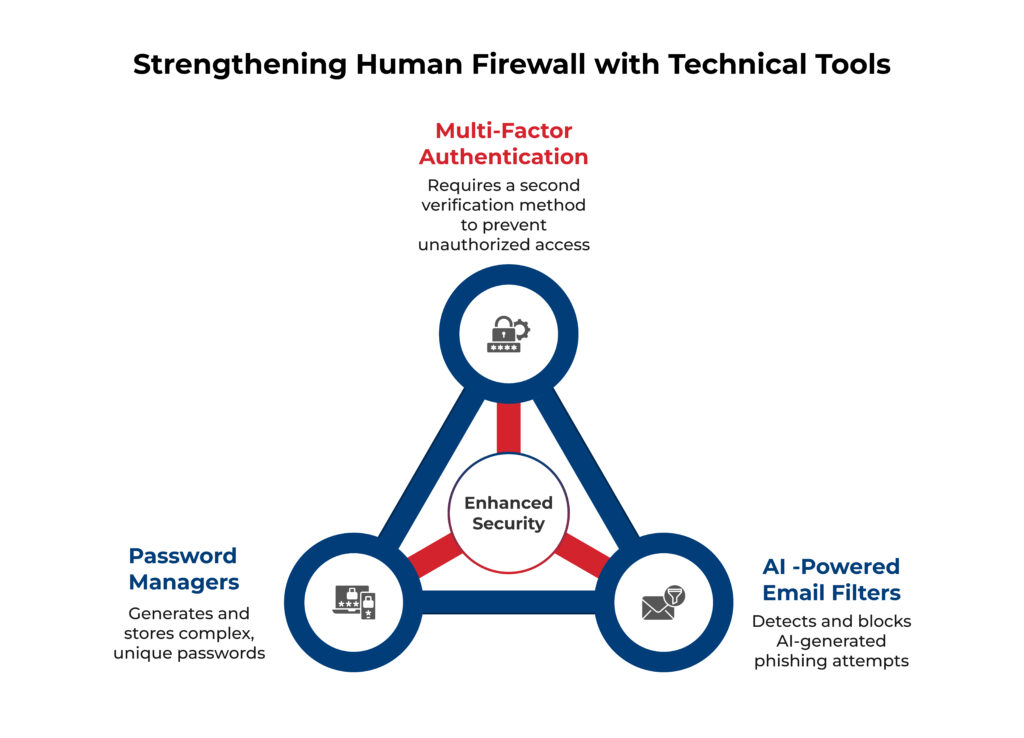

Foundational Security Tools to Support Your People

Here’s how technical tools strengthen your human firewall by automating key defenses:

- First, mandate Multi-Factor Authentication (MFA) across all business accounts. This is critical because MFA helps stop AI-enhanced credential theft and reuse attacks by requiring a second form of verification, even if an attacker has the correct password.

- Next, deploy AI-powered email filters to analyze message patterns and detect sophisticated threats. These systems work by identifying and blocking AI-generated phishing attempts that traditional spam filters might miss — protecting your team from deceptive messages.

- Finally, implement password managers to generate and store complex, unique passwords for every account. This greatly reduces the risk of weak or reused passwords, which are a common vulnerability exploited by attackers.
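To show why a stolen password alone is not enough once MFA is enabled, here is a minimal sketch of a time-based one-time password (TOTP, RFC 6238), the scheme used by most authenticator apps. It is for illustration only: the function names `totp` and `login_allowed` are assumptions, and in practice you would rely on your identity provider's built-in MFA rather than code like this.

```python
import hashlib
import hmac
import struct
import time


def totp(secret: bytes, at=None, step: int = 30, digits: int = 6) -> str:
    """Compute an RFC 6238 TOTP code (HMAC-SHA1) for the given Unix time."""
    now = time.time() if at is None else at
    counter = int(now // step)                       # number of 30-second steps
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F                          # dynamic truncation (RFC 4226)
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def login_allowed(password_ok: bool, submitted_code: str,
                  secret: bytes, at=None) -> bool:
    """Both factors must pass: the password check and the current code."""
    return password_ok and hmac.compare_digest(submitted_code, totp(secret, at))
```

Because the code changes every 30 seconds and is derived from a secret stored on the employee's device, an attacker who phishes only the password fails the second check.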

Together, MFA, AI-powered email filters, and password managers form a multi-layered technical defense that supports your human firewall — reducing the number of AI-driven cyber threats your team must face directly.
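As an illustration of what "complex, unique passwords" means in practice, the sketch below generates one with Python's standard `secrets` module. The alphabet, the 20-character default, and the function name `generate_password` are assumptions chosen for the example; a password manager performs the equivalent step for you and also stores the result.

```python
import secrets
import string

SYMBOLS = "!@#$%^&*-_=+"
ALPHABET = string.ascii_letters + string.digits + SYMBOLS


def generate_password(length: int = 20) -> str:
    """Return a random password containing all four character classes."""
    if length < 12:
        raise ValueError("use at least 12 characters")
    while True:
        candidate = "".join(secrets.choice(ALPHABET) for _ in range(length))
        # Retry until lowercase, uppercase, digit, and symbol are all present.
        if (any(c.islower() for c in candidate)
                and any(c.isupper() for c in candidate)
                and any(c.isdigit() for c in candidate)
                and any(c in SYMBOLS for c in candidate)):
            return candidate
```

Calling `generate_password()` once per account yields a different value each time, so a password leaked from one service cannot be reused against another.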

Take Action Now to Secure Your Holiday Season

By empowering your employees and establishing a clear verification playbook, you turn your team into your strongest defense layer against AI-powered cyber threats.

Ready to protect your business this holiday season? At CMIT Solutions, Silver Spring, we provide comprehensive IT services and expert guidance on implementing proactive defenses, helping you secure your business operations in this fast-moving environment.

Connect with us today — join the security huddle!